Over the past few years, I have been working with Dr. Agnes d’Entremont and Dr. Negar Harandi at the University of British Columbia to develop and deploy online homework questions in engineering subjects using the WeBWorK open online homework system.

Post by Jonathan Verrett, 2018–2019 BCcampus Research and Advocacy Fellow, Instructor, Chemical and Biological Engineering, University of British Columbia

Online homework systems are increasingly being used in many disciplines to support student learning outside of the classroom. These systems are particularly helpful with large classes, where they can give students immediate feedback and focus instructor time on more challenging concepts or problems (i.e., things that cannot be easily machine graded, such as explanations of solution processes or technical diagrams). These homework systems come with a variety of features, with some better suited for certain question types or disciplinary contexts. I’ll focus in this article on questions using mathematical formulas, which may be commonly used in disciplines related to engineering, science, mathematics, technology, economics, and statistics, to name only a few.

These mathematical formula questions, in their simplest form, generally have a fixed problem statement in which a variable is randomized within a set range. This means that each student gets the same question text, but with unique numbers for solving the problem. There are a variety of open homework systems, including WeBWorK, LON-CAPA, and IMathAS. In this article, I’ll focus on WeBWorK, but from my limited knowledge, the other systems seem to have similar capabilities.
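To make the idea of per-student randomization concrete, here is a minimal Python sketch. It is not WeBWorK code and does not describe how WeBWorK works internally; the pipe-flow question, the function names, the ID strings, and the ranges are all hypothetical and chosen only for illustration. It seeds a random number generator from the student and problem identifiers, so each student sees the same text with their own numbers, and sees the same numbers on every visit.

```python
import hashlib
import math
import random


def student_seed(student_id: str, problem_id: str) -> int:
    """Derive a repeatable integer seed from the student and problem IDs."""
    digest = hashlib.sha256(f"{student_id}:{problem_id}".encode()).hexdigest()
    return int(digest[:8], 16)


def make_problem(student_id: str, problem_id: str = "pipe-flow-1") -> dict:
    """Build one student's version of a hypothetical pipe-flow question."""
    rng = random.Random(student_seed(student_id, problem_id))

    # Randomize the givens within fixed ranges chosen by the problem author.
    diameter_cm = rng.randint(5, 20)
    velocity_m_s = round(rng.uniform(1.0, 3.0), 1)

    # The correct answer is computed from this student's own numbers.
    area_m2 = math.pi * (diameter_cm / 100) ** 2 / 4
    flow_m3_s = area_m2 * velocity_m_s

    text = (
        f"Water flows through a pipe of diameter {diameter_cm} cm at "
        f"{velocity_m_s} m/s. What is the volumetric flow rate in m^3/s?"
    )
    return {
        "text": text,
        "diameter_cm": diameter_cm,
        "velocity_m_s": velocity_m_s,
        "answer": flow_m3_s,
    }


if __name__ == "__main__":
    for student in ("student-a", "student-b"):
        print(make_problem(student)["text"])
```

Because the seed depends only on the two identifiers, regenerating the problem for the same student reproduces the same numbers and the same correct answer.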

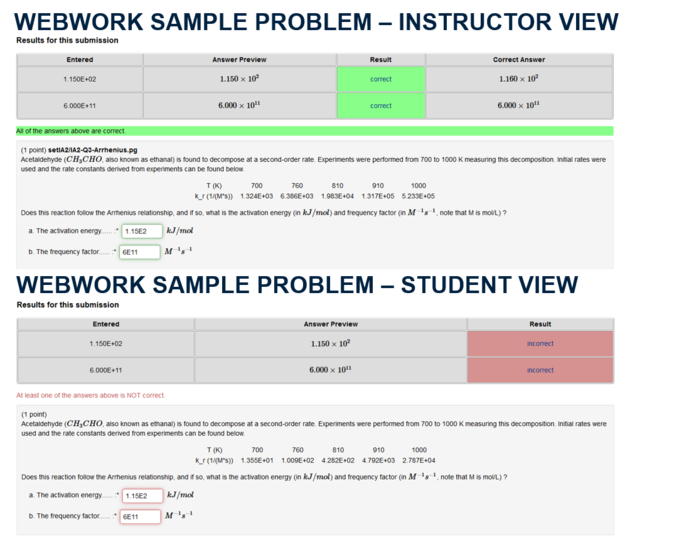

The two screenshots here show a WeBWorK problem I use in one of the courses I teach. The first screenshot shows the view I see of a problem as an instructor, and the second is from the student’s side of WeBWorK. As an instructor, I can choose to see the correct answers in the instructor view, whereas the students only see feedback as to whether their answer is correct or incorrect. You can also see in the instructor view that the solution entered and the correct answer are not exactly the same. A tolerance can be set by the problem designer, either as a percentage or as an absolute value, for comparing the entered answer to the correct answer. This leaves room for rounding in intermediate calculations, where that is appropriate for the subject matter.
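The difference between a percentage tolerance and an absolute tolerance can be sketched in a few lines of Python. This is only an illustration of the comparison described above, not WeBWorK's actual checker; the function name, parameter names, and example numbers are assumptions.

```python
def is_correct(entered: float, correct: float, tolerance: float,
               kind: str = "percent") -> bool:
    """Compare a student's entry to the correct answer within a tolerance.

    kind="percent":  tolerance is a percentage of the correct answer.
    kind="absolute": tolerance is in the same units as the answer.
    """
    error = abs(entered - correct)
    if kind == "percent":
        return error <= abs(correct) * tolerance / 100
    if kind == "absolute":
        return error <= tolerance
    raise ValueError(f"unknown tolerance kind: {kind!r}")


if __name__ == "__main__":
    # A 1% tolerance on a correct answer of 10.0 accepts 9.95 but not 9.8.
    print(is_correct(9.95, 10.0, 1.0))
    print(is_correct(9.8, 10.0, 1.0))
    # An absolute tolerance of 0.25 accepts 9.8.
    print(is_correct(9.8, 10.0, 0.25, kind="absolute"))
```

A percentage tolerance scales with the size of the answer, which suits problems whose randomized answers span a wide range. An absolute tolerance is the safer choice when the correct answer can be zero or very close to it, since a percentage of zero accepts nothing but an exact match.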

In my classes, I’ve used WeBWorK to encourage students to work together and share problem-solving approaches rather than solutions. This works reasonably well, since the numerical answer is different for each student: a student can’t just tell a colleague the answer is 5; they have to explain their approach. There are, of course, ways around this (e.g., sharing a spreadsheet), but this tends to catch up with students when they have to solve problems individually in exams. It is also mitigated by keeping WeBWorK a relatively low percentage of the overall course grade (say, under 5 per cent). I can also choose how many attempts to give students. I generally set this to unlimited, meaning students can make as many attempts as they like before the deadline. I want students to be able to try and get feedback at this stage in their learning, which frames WeBWorK as a learning process (formative) rather than a testing tool (summative). You can also limit attempts, depending on how you use WeBWorK in your course.

WeBWorK also supports many other problem types and features. Some other notable problem types include answers with variables in them (useful, say, in differentiating or integrating a function) as well as the creation of dynamic graphs (I’ve never used this, but I’ve heard it can be quite powerful).

The Engineering WeBWorK Project at UBC

When this project started, Dr. Agnes d’Entremont (Mechanical Engineering, MECH), Dr. Negar Harandi (Electrical and Computer Engineering, ELEC), and I (Chemical and Biological Engineering, CHBE) were using WeBWorK largely independently of one another at UBC. We came together to see where implementing WeBWorK would have the most impact and found that there were many overlaps between engineering disciplines at the second-year level. Taking fluid mechanics as an example, the course is taught at UBC in MECH, Civil Engineering (CIVL), and CHBE. Many topics in these courses are similar, with the exception of a few unique problems or perspectives from each discipline. As such, we focused on finding overlap in disciplinary content as well as instructors willing to use and support WeBWorK question development.

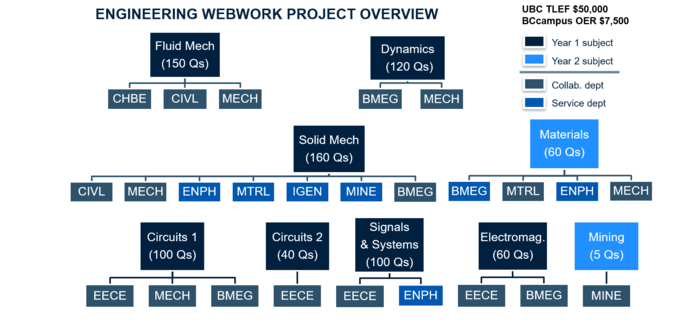

We started the project in the summer of 2018. The accompanying graphic summarizes the subject areas we worked on in Year 1 of the project and the engineering programs affected. For example, you can see from the graphic that solid mechanics (Solid Mech) is taught in CIVL, MECH, and Biomedical Engineering (BMEG), and that students in the Engineering Physics (ENPH), Materials (MTRL), Integrated (IGEN), and Mining (MINE) Engineering programs also take these courses as service courses. At the time we rolled out our first question sets in fall 2018, UBC had 12 engineering programs with second-year courses (some of which were in the same department, e.g., electrical engineering and computer engineering). Our project reached students in all these programs, representing around 900 engineering students. Many of these students were using WeBWorK in more than one of their classes. We’ve since expanded to a few other subjects for this most recent year (shown in the graphic as Year 2).

If you’re curious about how we developed these problems, we have a paper coming out describing this project in the 2019 Canadian Engineering Education Association Proceedings. That publication should be available in spring 2020, but until that time, we also have prior publications on WeBWorK’s impact on students and instructors. In 2018, Agnes published an article on student preferences in using single-box and multi-box question sets, and I published an article with a teaching assistant (Jun Sian Lee) on the impact of WeBWorK use on students and instructors. In 2017, Agnes, along with colleagues Patrick Walls and Peter Cripton, published an article on implementing WeBWorK in second-year mechanical engineering and the student feedback associated with this.

Sharing Problems

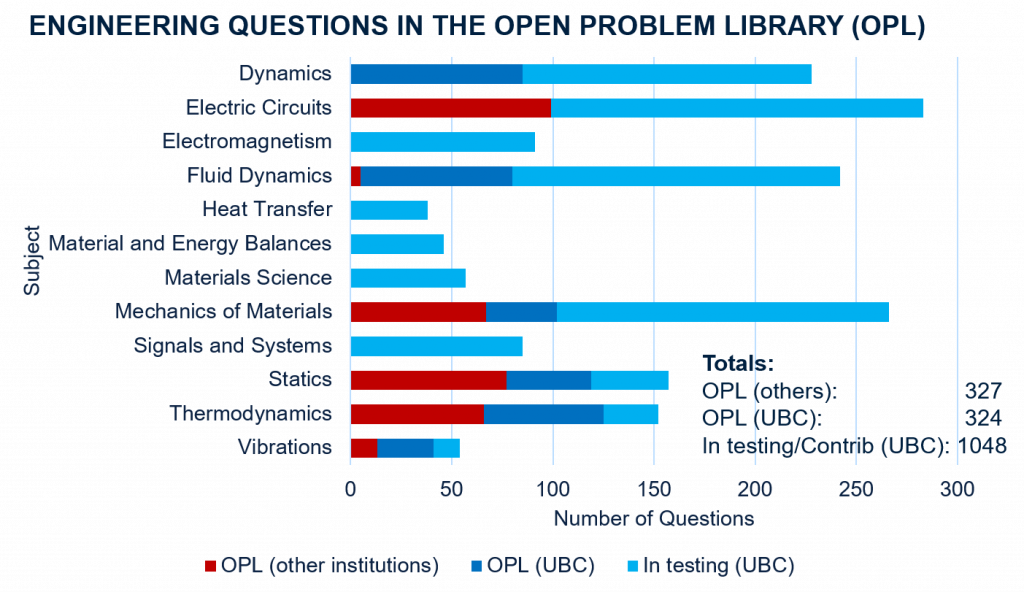

One of the powerful features of WeBWorK is its structure for sharing problems, called the Open Problem Library (OPL). It is hosted on GitHub, and users from a variety of institutions can and do contribute problems in a variety of subject areas. When we started our project, the OPL had over 35,000 questions. However, these were mainly in mathematics. Only two engineering subjects were represented, with around 200 questions between them.

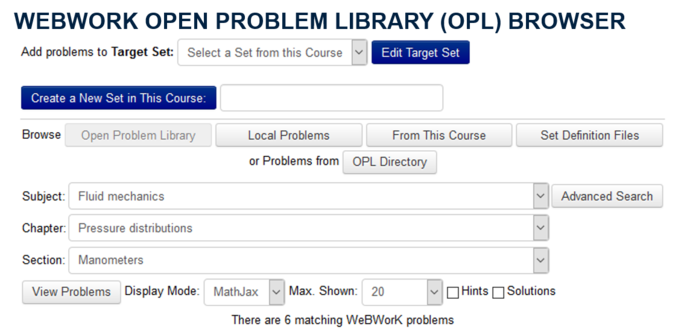

The OPL is organized into a three-tier taxonomic structure. Questions submitted need to be categorized with a subject, chapter, and section so they can be easily found by other users. Each instance of WeBWorK has a relatively simple interface for searching through the OPL for questions (I show a screenshot of this below for reference). Since questions need to be categorized, the taxonomic structure for a subject must be in place before questions are accepted into the OPL.

Since starting the project, we’ve developed new taxonomies for five subjects and revised one of the existing engineering taxonomies to split it into two distinct subjects. You can see a summary of all subjects we’ve worked on and the number of questions our project has developed in the graphic below. We’ve also been able to categorize problems created by other WeBWorK users into the subject taxonomies we have made, which has increased the number of problems contributed by other users from the original 200 to over 300. Problems submitted to the Open Problem Library should be tested in at least one course iteration, and some of the problems we are developing are still in this testing phase, as you can see in the graphic. You can find the problems we at UBC have developed (even those not yet moved into the OPL) on GitHub.

One neat thing that we’ve been able to do is use automated scripts to convert problems given to us by collaborators from other homework systems. We’ve converted 41 statics problems given to us by Eric Davishahl at Whatcom College, which were originally in the IMathAS language. We’ve also converted 190 fluid mechanics problems from Bryce Hosking, Jon Pharoah, and Rick Sellens at Queen’s University, which were originally written for Desire2Learn. For those physicists out there looking to use WeBWorK, folks at Brock University have coded problems from the OpenStax Physics textbook. These questions aren’t yet in the OPL, as there is still a need for taxonomies for physics subject areas, but perhaps some enterprising faculty would be willing to create them.

Our plan with the project is to continue to support development and use of problems at UBC, and to continue to expand the WeBWorK OPL taxonomies for engineering subjects. If you’re interested in using WeBWorK, contributing problems or collaborating, or just have questions, feel free to reach out to me by email at jonathan.verrett@ubc.ca.

Acknowledgements

The engineering WeBWorK project at UBC is co-led by Dr. Agnes d’Entremont, Dr. Negar Harandi, and me (Dr. Jonathan Verrett). I would like to acknowledge that this work was completed on the traditional, ancestral, and unceded territory of the Musqueam people. I would also like to acknowledge the financial support for this project provided by UBC Vancouver students via the Teaching and Learning Enhancement Fund, as well as additional funding support from BCcampus, the UBC Department of Mechanical Engineering, and the UBC Applied Science Dean’s Office.

Notable quote

“WeBWorK is one of the platforms BCcampus is exploring as part of Open Homework Systems (OHS) project due in no small part to the work done at UBC. Dr. d’Entremont, who worked closely with Dr. Verrett and Dr. Harandi on this UBC project, is also a member of the BCcampus Open Homework Systems Advisory Group.” – Clint Lalonde, Project Manager, Open Source Homework Systems

Learn more:

- BCcampus Open Homework Systems Advisory Group

- Early thoughts on the BCcampus open homework systems project